Machine downtime analysis: from root cause to corrective action

Every plant has that one machine. The one where the shift lead shrugs and says, "It just does that sometimes." The stops are frequent enough to be frustrating but inconsistent enough that nobody can pin down the cause. The result? Lost hours, missed schedules, and a spreadsheet full of vague downtime codes that don't tell you anything useful.

If that sounds familiar, you're not alone. The gap between knowing that a machine stopped and understanding why it stopped is where most manufacturing operations lose the thread on machine downtime analysis. This guide lays out a practical framework for closing that gap, from structured root cause work to corrective actions that hold.

Key terms worth defining up front

Before we get into it, let's define a few terms that come up throughout this article. These aren't textbook definitions; they're what these concepts mean on the floor.

| Term | What it means in practice |
|---|---|
| OEE (Overall Equipment Effectiveness) | Availability x Performance x Quality. A single percentage that shows how much of your theoretical maximum output you're actually capturing |
| Availability | The percentage of planned production time the machine is actually running, excluding unplanned stops and changeovers |
| Performance | How close your actual cycle time is to your ideal cycle time. Captures speed losses that are often invisible |
| Quality | Good parts divided by total parts. Reflects scrap, rework, and startup rejects |
| MTBF (Mean Time Between Failures) | Total uptime divided by the number of failures. A reliability metric for maintenance planning |
| MTTR (Mean Time To Repair) | Total repair time divided by number of repairs. Tells you how efficient your maintenance response is |

The important distinction: uptime only tells you whether the machine ran. OEE measurement tells you whether it ran well. Most plants can quote their uptime rate, but performance and quality losses are harder to see without structured data capture.
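To make the two reliability metrics from the table concrete, here is a minimal sketch of the arithmetic. The function names and the sample month of data are hypothetical and only illustrate the definitions given above.

```python
def mtbf_hours(total_uptime_hours: float, failure_count: int) -> float:
    """Mean Time Between Failures: total uptime divided by the number of failures."""
    if failure_count <= 0:
        raise ValueError("MTBF is undefined without at least one failure")
    return total_uptime_hours / failure_count


def mttr_hours(repair_durations_hours: list[float]) -> float:
    """Mean Time To Repair: total repair time divided by the number of repairs."""
    if not repair_durations_hours:
        raise ValueError("MTTR is undefined without at least one repair")
    return sum(repair_durations_hours) / len(repair_durations_hours)


# Hypothetical month for one machine: 480 hours of uptime and four failures.
repairs = [1.0, 2.5, 1.5, 3.0]  # hours spent on each repair
print(mtbf_hours(480.0, len(repairs)))  # 120.0
print(mttr_hours(repairs))              # 2.0
```

Read together, the two numbers say this machine fails about once every 120 running hours and takes about 2 hours to bring back each time.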

Why your downtime data looks different across identical machines

Here's a scenario continuous improvement leaders know well: two identical injection molding presses, same product, same shift, and their downtime reports look completely different. One logs "mechanical failure" while the other logs "unplanned stop."

The problem isn't the machines. It's the classification system. When operators manually categorize stops at the end of a shift, recall bias, inconsistent terminology, and time pressure corrupt the data. One operator's "material jam" is another's "feed issue." Trend analysis fails before it starts.

This is exactly why downtime tracking software exists. Standardized, guided loss categories, captured in the moment rather than hours later, transform messy data into something you can actually act on. The goal isn't perfect data from day one. It's consistent data that improves over time.

A practical starting taxonomy looks like this:

Equipment failure (mechanical, electrical, pneumatic, hydraulic)

Setup and changeover

Material and supply issues

Operator or process-related stops

Planned maintenance or cleaning

Each category should have 3-5 subcategories relevant to your equipment. A stamping press needs different subcategories than a packaging line, so make sure the system lets you customize.
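One way to picture a standardized taxonomy is as a small lookup that maps whatever an operator types to a single agreed code. The sketch below is illustrative only: the top-level categories come from the list above, while the subcategories (other than the four equipment-failure types), the alias table, and the `normalize` helper are hypothetical placeholders you would replace with your own.

```python
# Top-level categories follow the starting taxonomy above. Subcategories other
# than the four equipment-failure types are hypothetical placeholders.
TAXONOMY = {
    "Equipment failure": ["Mechanical", "Electrical", "Pneumatic", "Hydraulic"],
    "Setup and changeover": ["Tooling change", "Material change", "First-article check"],
    "Material and supply issues": ["Feed jam", "Material shortage", "Wrong material"],
    "Operator or process-related stops": ["Operator unavailable", "Process adjustment", "Waiting on instructions"],
    "Planned maintenance or cleaning": ["Preventive maintenance", "Cleaning", "Inspection"],
}

# Hypothetical aliases: free-text labels found in old logs, mapped to one code.
ALIASES = {
    "material jam": ("Material and supply issues", "Feed jam"),
    "feed issue": ("Material and supply issues", "Feed jam"),
    "mechanical failure": ("Equipment failure", "Mechanical"),
}


def normalize(label: str) -> tuple[str, str]:
    """Map a free-text stop reason to (category, subcategory).

    Unknown labels are returned as "Uncategorized" so they can be reviewed
    and added to the alias table instead of silently polluting the data.
    """
    key = " ".join(label.lower().split())  # lowercase and collapse whitespace
    return ALIASES.get(key, ("Uncategorized", key))


print(normalize("Material jam"))    # ('Material and supply issues', 'Feed jam')
print(normalize("  Feed  issue "))  # ('Material and supply issues', 'Feed jam')
print(normalize("unplanned stop"))  # ('Uncategorized', 'unplanned stop')
```

With a mapping like this, one operator's "material jam" and another's "feed issue" land in the same bucket, which is what makes trend analysis possible.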

The five downtime archetypes every plant recognizes

Look across enough stop events, and clear patterns emerge. These aren't abstract concepts; they're patterns your maintenance team will recognize right away.

The chronic mechanic: Recurring equipment failures every 2-4 weeks. Spindle faults, pump seal leaks, motor burnout. MTTR is 1-3 hours each time. The fix is condition-based maintenance and a tighter inspection cadence.

The setup monster: Changeover times stretching to 45-90 minutes when they should take 20. Often normalized as "just how this machine works." A focused SMED (Single-Minute Exchange of Die) event typically cuts this by 30-50% within weeks.

The jam master: Random material feed issues, 1-3 times per shift. Usually blamed on "bad material" or "operator error." Real root cause is often sensor misalignment, feed mechanism wear, or insufficient clearance in tooling.

The drift king: Machine runs, but cycle time slowly creeps up 5-15% over a shift. Invisible without real-time cycle-time tracking. Caused by thermal expansion, tool wear, or coolant degradation. This is where OEE monitoring software earns its keep, because operators can't feel a 1.5-minute cycle-time drift on a 15-minute part.

The quality sneak: Reject rate climbs from 2% to 10% during a run, caught too late. Root causes include tolerance stack-up, material property drift, or measurement instrument error.
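The drift king is the archetype that benefits most from a simple automated check. As a rough sketch, assuming you already have a list of cycle times for the shift, you can compare the average of the most recent cycles to the average of the first few. The window size and the 5% threshold are assumptions chosen to match the 5-15% range described above, not recommended settings.

```python
from statistics import mean


def drift_ratio(cycle_times_min: list[float], window: int = 5) -> float:
    """Fractional change between the first and last `window` cycles of a run."""
    if len(cycle_times_min) < 2 * window:
        raise ValueError("need at least two full windows of cycle times")
    baseline = mean(cycle_times_min[:window])
    recent = mean(cycle_times_min[-window:])
    return (recent - baseline) / baseline


def is_drifting(cycle_times_min: list[float], threshold: float = 0.05, window: int = 5) -> bool:
    """Flag a run whose recent cycles are slower than its baseline by `threshold` or more."""
    return drift_ratio(cycle_times_min, window) >= threshold


# Hypothetical shift: a 15-minute part that creeps up to 16.5 minutes.
cycle_times = [15.0] * 5 + [15.5, 16.0] + [16.5] * 5
print(round(drift_ratio(cycle_times), 3))  # 0.1
print(is_drifting(cycle_times))            # True
```

A real deployment would likely compare against the ideal cycle time and smooth out noise, but even this crude comparison surfaces a creep that nobody on the floor would feel.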

Where the biggest losses actually hide

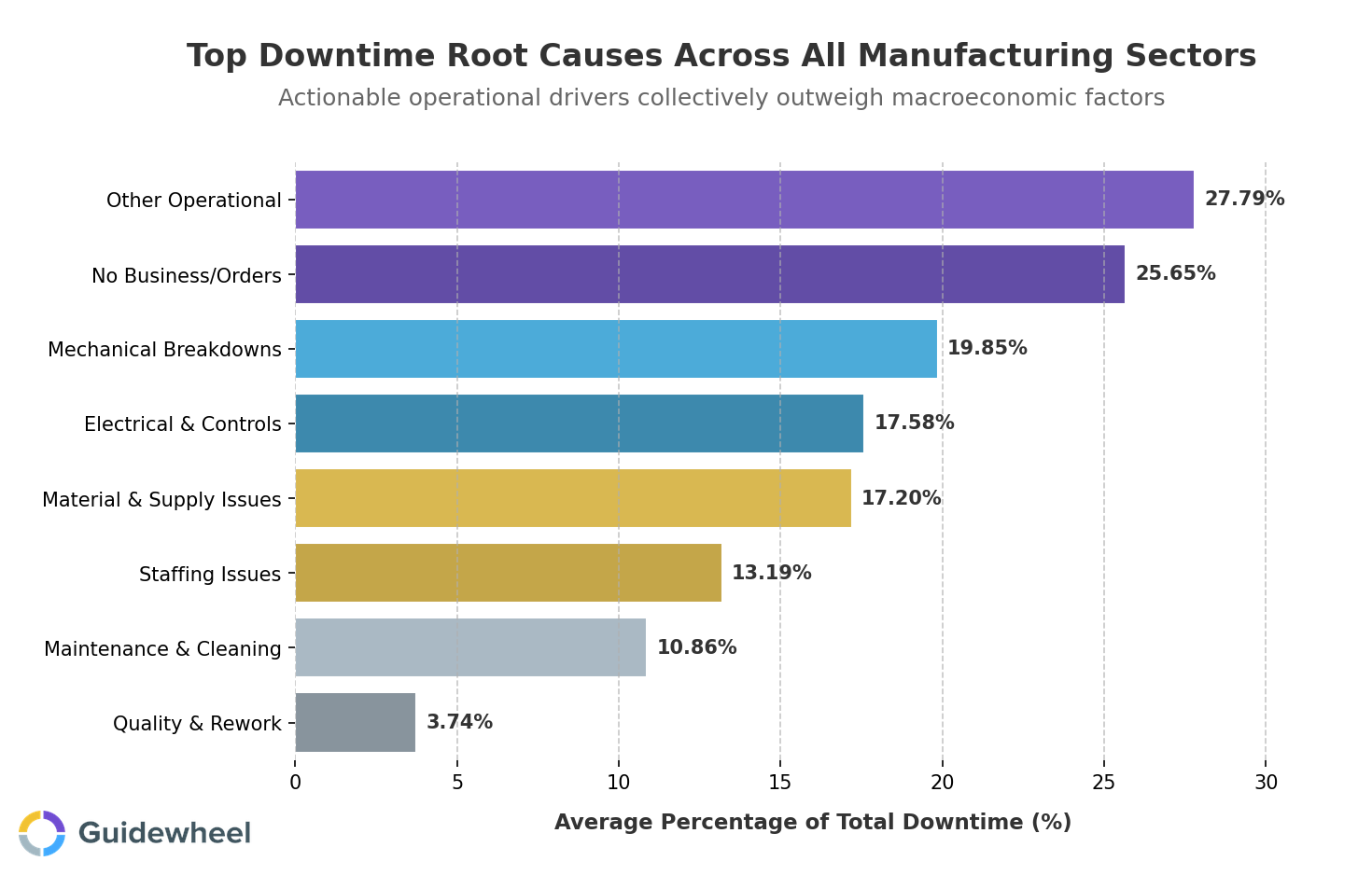

Performance analysis across manufacturing sectors reveals that the most actionable downtime drivers aren't always the most obvious ones.

According to Guidewheel Performance Analysis across 3,000+ machines, while "No Business/Orders" accounts for roughly 26% of downtime, the categories that plant teams can directly control tell a more compelling story:

| Downtime category | % of total downtime | Avg. duration per event | Annual loss per line |
|---|---|---|---|
| Other operational | 28% | 81 min | 266 hrs |
| Mechanical breakdowns | 20% | 72 min | 91 hrs |
| Electrical & controls | 18% | 107 min | 190 hrs |
| Material & supply issues | 17% | 119 min | 334 hrs |
| Staffing issues | 13% | 197 min | 423 hrs |
| Maintenance & cleaning | 11% | 85 min | 154 hrs |

The key insight: operational, mechanical, electrical, and material issues collectively represent a much larger improvement opportunity than any single category alone. These are losses your team can address through targeted interventions, better spare parts strategy, standardized procedures, and structured machine downtime analysis.

Staffing-related stops deserve special attention. At nearly 200 minutes per event, they're among the longest-duration stops in the dataset. Proactive shift scheduling, cross-training programs, and remote monitoring capabilities can help operations teams respond faster when coverage gaps appear.

How OEE calculation connects root cause to corrective action

Let's make this concrete. Say your CNC mill ran an 8-hour shift with a 30-minute planned changeover, giving you 7.5 hours of planned production time.

Actual runtime: 6.5 hours (lost 1 hour to a spindle fault and tool holder jam)

Cycle time: Theoretical 15 min, actual average 16.5 min

Output: 95 parts started, 93 good parts (2 rejects from tolerance drift)

The OEE calculation breaks down like this:

| Component | Formula | Result |
|---|---|---|
| Availability | 6.5 / 7.5 | 86.7% |
| Performance | 15 / 16.5 | 90.9% |
| Quality | 93 / 95 | 97.9% |
| OEE | 86.7% x 90.9% x 97.9% | 77.1% |

The operator sees "6.5 hours of runtime" and thinks it was a decent shift. But OEE data reveals three separate loss streams. The 1-hour stop is obvious. The 1.5-minute cycle-time creep per part and 2 rejects? Those are invisible without structured data capture. That's the difference between uptime tracking and true OEE reporting.
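The same arithmetic can be written as a short function, which is handy for checking a dashboard's numbers by hand. This is a minimal sketch using the shift figures from the example above as stated; the function and parameter names are hypothetical.

```python
def oee(planned_hours: float, runtime_hours: float,
        ideal_cycle_min: float, actual_cycle_min: float,
        good_parts: int, total_parts: int) -> dict[str, float]:
    """Return the three OEE components and their product, as fractions of 1."""
    availability = runtime_hours / planned_hours
    performance = ideal_cycle_min / actual_cycle_min
    quality = good_parts / total_parts
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Figures from the CNC mill example: 7.5 planned hours, 6.5 hours of runtime,
# 15 min ideal vs 16.5 min actual cycle time, 93 good parts out of 95.
result = oee(7.5, 6.5, 15.0, 16.5, 93, 95)
for name, value in result.items():
    print(f"{name}: {value * 100:.1f}%")
# availability: 86.7%
# performance: 90.9%
# quality: 97.9%
# oee: 77.1%
```

Keeping the three components separate in the output matters more than the final number, because each one points to a different kind of corrective action.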

What "good" looks like, and why context matters

There's no universal "good" OEE score. A job shop running high-mix, low-volume work on legacy CNC equipment operates in a fundamentally different context than a high-speed packaging line running the same SKU 24/7. These benchmarks serve as reference points, not rigid targets.

The chart above illustrates how runtime performance varies dramatically across sectors. In Plastics and Packaging, for example, the median runtime sits around 26% while the weighted average reaches 60%, revealing that a small number of high-volume machines heavily influence sector-wide numbers. Your facility's optimal performance depends on your specific equipment mix, product complexity, and operational priorities.

| OEE range | What it typically indicates |
|---|---|
| 50-65% | Common starting point for plants just beginning to measure. Significant hidden losses, but also significant opportunity |
| 65-75% | Achievable within 6-12 months with structured downtime tracking and basic maintenance discipline |
| 75-85% | Standard for well-managed operations with production monitoring software and continuous improvement culture |
| 85%+ | Typically seen in high-volume, process-controlled environments. Diminishing returns above this threshold |

The honest truth: chasing 100% OEE is not a meaningful goal. Focus on closing the gap between where you are and your realistic next step. A 3-5% OEE improvement in year one is both achievable and significant.

From spreadsheets to a machine monitoring dashboard that drives action

The evolution most plants follow looks like this:

Manual logs and spreadsheets: Data is 8-24 hours old by the time anyone sees it. Categorization is inconsistent. Performance and quality losses are invisible. Supervisors spend 2-4 hours weekly compiling reports that nobody trusts

Basic SCADA plus spreadsheet reporting: Machine timestamps are accurate, but operators still manually select stop reasons. Quality and downtime data live in separate systems. Reporting is daily or weekly, too slow for intervention

Automated OEE tracking software with real-time dashboards: Stop events are detected automatically via sensors or power-draw changes. Operators categorize reasons in under 2 minutes via mobile app or touchscreen. Cycle-time drift and quality events are linked to specific shifts, operators, and parts

The jump from manual logs to automated OEE tracking doesn't require a massive IT project. Guidewheel's FactoryOps platform, for instance, uses simple clip-on current sensors that read electrical current from any machine, legacy or brand-new, and runs on cellular, so no plant Wi-Fi is required. Its proprietary algorithms then translate that power data into run/idle/down states, cycle times, and stop events, all without touching a single PLC.

This matters because the biggest blocker to adopting better OEE software usually isn't cost. It's complexity. If deployment takes 6 months and requires a dedicated IT team, most mid-market plants never get there.
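To give a feel for how current readings can become machine states, here is a deliberately naive sketch. It is not Guidewheel's algorithm, which is proprietary and not described here. The fixed thresholds, the one-minute sampling interval, and the function names are all assumptions for illustration.

```python
def classify_state(amps: float, idle_threshold: float = 1.0, run_threshold: float = 5.0) -> str:
    """Label one current reading as 'down', 'idle', or 'run' using fixed thresholds.

    The thresholds are hypothetical; real values depend on the machine.
    """
    if amps < idle_threshold:
        return "down"
    if amps < run_threshold:
        return "idle"
    return "run"


def stop_events(amps_series: list[float], sample_seconds: float = 60.0) -> list[tuple[int, float]]:
    """Group consecutive non-running samples into (start_index, duration_seconds) events."""
    events = []
    start = None
    for i, amps in enumerate(amps_series):
        running = classify_state(amps) == "run"
        if not running and start is None:
            start = i  # a stop begins at this sample
        elif running and start is not None:
            events.append((start, (i - start) * sample_seconds))
            start = None
    if start is not None:  # the series ended while the machine was still stopped
        events.append((start, (len(amps_series) - start) * sample_seconds))
    return events


# Hypothetical one-minute readings: a two-minute stop, then a one-minute idle.
readings = [8.0, 8.0, 0.2, 0.3, 8.0, 2.0, 8.0]
print(stop_events(readings))  # [(2, 120.0), (5, 60.0)]
```

Even this simple version logs the one-minute stop that a manual end-of-shift log would almost certainly miss.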

A practical 90-day action plan

Here's a realistic roadmap that respects the fact that you have production to run while you're improving how you measure it:

| Timeline | Action | Expected outcome |
|---|---|---|
| Weeks 1-4 | Deploy automated downtime capture on 2-3 target machines. Standardize your loss categories | Accurate baseline data flowing in days, not months |
| Weeks 4-8 | Calculate baseline OEE. Identify your #1 downtime driver using Pareto analysis | Clear priority for your first improvement event |
| Weeks 8-12 | Run a focused corrective action on the top loss driver. Track OEE impact weekly | 1-3% OEE gain from addressing a single root cause |
| Months 4-6 | Expand to additional lines. Establish shift-level performance comparisons | Cross-shift accountability and a growing improvement culture |

Why does your downtime dashboard sometimes disagree with your production count? Usually because manual counts miss micro-stops (under 5 minutes) and cycle-time variation. Automated capture closes this gap by logging every state change, not just the ones operators notice.

Building a downtime loss tree from machine state data starts with the stop event taxonomy described above, then layers in duration and frequency analysis. Software that supports a real-time Pareto of downtime causes generates this view automatically, so you see your top 3-5 loss drivers updated continuously rather than waiting for an end-of-week report.
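A Pareto view is simple enough to sketch by hand: total the downtime minutes per category, sort from largest to smallest, and track the cumulative share. The example below assumes stop events arrive as (category, minutes) pairs; the event data and the `pareto` function name are hypothetical.

```python
from collections import defaultdict


def pareto(events: list[tuple[str, float]]) -> list[tuple[str, float, float]]:
    """Return (category, total_minutes, cumulative_percent) rows, largest loss first."""
    totals: dict[str, float] = defaultdict(float)
    for category, minutes in events:
        totals[category] += minutes
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    rows = []
    cumulative = 0.0
    for category, minutes in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
        cumulative += minutes
        rows.append((category, minutes, round(100 * cumulative / grand_total, 1)))
    return rows


# Hypothetical week of stop events for one line, in minutes.
events = [
    ("Material and supply issues", 120),
    ("Equipment failure", 60),
    ("Material and supply issues", 60),
    ("Setup and changeover", 45),
    ("Equipment failure", 15),
]
for row in pareto(events):
    print(row)
# ('Material and supply issues', 180.0, 60.0)
# ('Equipment failure', 75.0, 85.0)
# ('Setup and changeover', 45.0, 100.0)
```

In this made-up week, one category accounts for 60% of lost minutes, which is the kind of clear priority the Weeks 4-8 step is meant to produce.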

Start turning stop events into throughput gains

Every stop event on your machines tells you something. The question is whether that information reaches the right people fast enough to drive corrective action. The path from reactive operations to structured machine downtime analysis isn't about perfection. It's about consistency, visibility, and a willingness to start with what you have.

If you're ready to move beyond spreadsheets and see your factory floor in real time, book a demo to see how Guidewheel's FactoryOps platform helps you find and recover the hidden capacity already sitting in your operation — starting with your toughest line.

With Guidewheel, we now get key metrics like production, downtime, downtime codes, scrap, and cycle time automatically and accurately. Our team no longer takes time to track manually and has been able to instead invest that time in improvements. Everybody knows when we're winning or losing. Each teammate understands how their work drives the success of the organization, and that every decision they make has a direct impact on the business.

Edgar Yerena, COO, Custom Engineered Wheels (via Guidewheel Customer Research)

Frequently asked questions

What is OEE and what does it actually measure?

OEE stands for Overall Equipment Effectiveness. It multiplies three components — Availability, Performance, and Quality — into a single percentage that represents how much of your theoretical maximum output you're actually producing. Unlike uptime alone, OEE captures speed losses and quality defects that are often invisible without structured data collection.

How do you calculate OEE in a manufacturing environment?

The formula is Availability x Performance x Quality. Availability equals actual runtime divided by planned production time. Performance equals ideal cycle time divided by actual cycle time. Quality equals good parts divided by total parts produced. You need three clean data streams to calculate it accurately: stop events, cycle times, and quality records.

What is considered a good OEE score?

It depends on your equipment type, product mix, and plant maturity. As a general reference, 65-75% indicates early maturity with structured tracking in place, 75-85% reflects well-managed operations with continuous improvement culture, and 85%+ is typically seen only in high-volume, highly automated environments. The goal isn't a specific number but consistent improvement from your baseline.

Can OEE be gamed, and how do you prevent it?

Yes, it can. Common tactics include excluding changeover time from planned production time, reclassifying unplanned stops as "planned," or loosening quality thresholds. The best defense is automated data capture that removes subjectivity, standardized definitions enforced across shifts, and regular audits comparing OEE trends to actual output.

How quickly can a manufacturer see ROI from real-time machine monitoring?

Most facilities begin seeing actionable insights within the first few weeks of deployment. According to Guidewheel Customer Research, measurable OEE improvements of 2-5% are common within the first 6 months when paired with structured root cause analysis and corrective action. Most facilities recoup their investment well within the first year, though timelines vary with baseline performance and production volume.

About the author

Lauren Dunford is the CEO and Co-Founder of Guidewheel, a FactoryOps platform that empowers factories to reach a sustainable peak of performance. A graduate of Stanford, she is a JOURNEY Fellow and World Economic Forum Tech Pioneer. Watch her TED Talk—the future isn't just coded, it's built.