Multi-plant machine monitoring: how to standardize uptime tracking and OEE across sites

If you run more than one plant, or even more than one line, you already know the frustration: Plant A reports 78% OEE, Plant B says 72%, and you have no idea whether that gap reflects a genuine performance difference or just two teams counting downtime differently. Fragmented data, inconsistent definitions, and 24-hour reporting delays make it nearly impossible to benchmark fairly, prioritize improvement, or hold anyone accountable to the same numbers.

The good news? You don't need a massive MES replacement to fix this. A practical machine monitoring approach, standardized OEE calculation, and a clear governance model can give you a trustworthy, plant-wide source of truth in weeks. Here's how.

Key terms to know before we dive in

If your team includes folks newer to these concepts, a quick alignment on definitions saves confusion later.

| Term | What it means in plain English |
|---|---|
| OEE (Overall Equipment Effectiveness) | A single percentage that combines three factors: was the machine available, did it run at full speed, and did it make good parts? |
| Availability | The share of planned production time (scheduled time minus planned downtime) that the machine actually ran |
| Performance | How close the machine ran to its ideal (design) cycle time while it was running |
| Quality | The percentage of output that met spec on the first pass |
| Uptime | The share of time a machine spends actively running, as opposed to sitting idle or down |
| Reason codes | Standardized categories operators select to explain why a machine stopped |
| Volume-weighted average | An average that accounts for how much each machine or line actually produces, giving heavier weight to high-volume assets |

With those locked in, let's look at why standardization matters right now.

Why standardized uptime tracking matters more than ever

Rising labor costs, tighter delivery windows, and supply chain volatility mean every lost production hour hits your margin harder than it did five years ago. Industry estimates suggest unplanned downtime can cost $50,000 to $250,000+ per incident on a single production line, depending on your equipment and order commitments.

Yet most multi-site manufacturers still rely on shift-end summaries, manual spreadsheets, and inconsistent loss categories. Different plants define "planned downtime" differently. One site excludes changeovers from availability; another includes them. The result? You can't tell if a 72% OEE at Plant B is a real problem or just a math problem.

Standardizing how every site captures, categorizes, and calculates performance is the single fastest way to turn scattered numbers into decisions you can trust.

The OEE formula: get the math right first

Before you can benchmark anything, every plant needs to calculate OEE the same way. The standard formula is straightforward:

OEE (%) = Availability × Performance × Quality
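Written out in terms of the quantities used in the worked example that follows, each factor is a simple ratio. The symbols are our own shorthand, not a formal standard: $T_{\text{sched}}$ is scheduled time, $T_{\text{planned}}$ and $T_{\text{unplanned}}$ are planned and unplanned downtime, $t_{\text{ideal}}$ and $t_{\text{actual}}$ are the theoretical and average measured cycle times while running, and $N$ counts parts.

$$
\text{Availability} = \frac{T_{\text{sched}} - T_{\text{planned}} - T_{\text{unplanned}}}{T_{\text{sched}} - T_{\text{planned}}}
\qquad
\text{Performance} = \frac{t_{\text{ideal}}}{t_{\text{actual}}}
\qquad
\text{Quality} = \frac{N_{\text{total}} - N_{\text{defective}}}{N_{\text{total}}}
$$

Note that planned downtime drops out of the availability denominator entirely, which is exactly why plants must agree on what counts as "planned."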

Here's a quick worked example for a CNC machining center on an 8-hour shift:

| Component | Data | Calculation or note | Result |
|---|---|---|---|
| Scheduled time | 480 min | Baseline | |
| Planned downtime (PM window) | 20 min | Excluded from denominator | |
| Unplanned downtime | 45 min | Tool breakage + jam | |
| Availability | (480 − 20 − 45) / (480 − 20) | 415 / 460 | 90.2% |
| Theoretical cycle time | 40 sec/part | Design spec | |
| Actual cycle time | 48 sec/part | Measured (worn insert) | |
| Performance | 40 / 48 | | 83.3% |
| Total parts produced | 480 | Measured | |
| Defective parts | 18 | Tool chatter marks | |
| Quality | (480 − 18) / 480 | 462 / 480 | 96.3% |
| OEE | 90.2% × 83.3% × 96.3% | | 72.4% |
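To make the arithmetic reproducible at every site, it helps to encode it once. The minimal Python sketch below recomputes the CNC shift from the same inputs; the `ShiftData` and `oee` names are illustrative, not part of any product API. Carrying full precision through the multiplication gives an OEE of about 72.4%, so rounding each factor before multiplying can shift the last digit.

```python
from dataclasses import dataclass


@dataclass
class ShiftData:
    scheduled_min: float       # total scheduled time for the shift
    planned_down_min: float    # planned downtime, excluded from the denominator
    unplanned_down_min: float  # unplanned stops, counted as availability loss
    ideal_cycle_s: float       # validated theoretical cycle time per part
    actual_cycle_s: float      # measured average cycle time while running
    total_parts: int           # all parts produced, good and defective
    defective_parts: int       # parts that failed first-pass quality


def oee(shift: ShiftData) -> dict[str, float]:
    """Return Availability, Performance, Quality and OEE as fractions (0 to 1)."""
    planned_production = shift.scheduled_min - shift.planned_down_min
    run_time = planned_production - shift.unplanned_down_min
    availability = run_time / planned_production
    performance = shift.ideal_cycle_s / shift.actual_cycle_s
    quality = (shift.total_parts - shift.defective_parts) / shift.total_parts
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# The CNC machining center shift from the worked example
cnc_shift = ShiftData(480, 20, 45, 40, 48, 480, 18)
for name, value in oee(cnc_shift).items():
    print(f"{name:>12}: {value:.2%}")
```

Keeping the calculation in one shared function, rather than in each plant's spreadsheet, is the simplest way to guarantee the formula itself never drifts between sites.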

The critical governance step: document, in writing, how every plant answers these questions:

Does "scheduled time" include breaks and shift handover?

Are changeovers planned or unplanned?

What is the validated theoretical cycle time for each product-line combination?

Does "defective" mean scrap only, or scrap plus rework?

When two plants answer those questions differently, you get phantom performance gaps that waste everyone's time.
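One way to make the written answers auditable is to store each plant's definition sheet as data and diff them. The Python sketch below assumes a hypothetical `OEEDefinition` schema covering three of the yes/no questions (cycle times are per product-line and would live elsewhere). It then runs one identical shift, with a hypothetical 30-minute changeover, through both plants' rules: Plant A books the changeover as planned and reports about 89.5% availability, while Plant B books it as unplanned and reports about 83.7%, a phantom gap of roughly six points from definitions alone.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class OEEDefinition:
    """One plant's written answers to the governance questions (hypothetical schema)."""
    breaks_in_scheduled_time: bool   # do breaks and handover count as scheduled time?
    changeovers_planned: bool        # are changeovers planned (True) or unplanned (False)?
    defective_includes_rework: bool  # scrap only (False) or scrap plus rework (True)?


def definition_gaps(a: OEEDefinition, b: OEEDefinition) -> list[str]:
    """Names of the questions two plants answer differently."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


def availability(scheduled: float, planned_down: float, unplanned_down: float,
                 changeover: float, definition: OEEDefinition) -> float:
    """Shift availability, booking changeover time according to the plant's definition."""
    if definition.changeovers_planned:
        planned_down += changeover    # excluded from the denominator
    else:
        unplanned_down += changeover  # counted as availability loss
    planned_production = scheduled - planned_down
    return (planned_production - unplanned_down) / planned_production


plant_a = OEEDefinition(breaks_in_scheduled_time=False, changeovers_planned=True,
                        defective_includes_rework=False)
plant_b = OEEDefinition(breaks_in_scheduled_time=False, changeovers_planned=False,
                        defective_includes_rework=True)

print(definition_gaps(plant_a, plant_b))
# The same physical shift: 480 min scheduled, 20 min PM, 45 min unplanned, 30 min changeover
print(f"Plant A availability: {availability(480, 20, 45, 30, plant_a):.1%}")
print(f"Plant B availability: {availability(480, 20, 45, 30, plant_b):.1%}")
```

Running `definition_gaps` as part of a quarterly audit turns "are we counting the same way?" into a check that either passes or names the exact question to resolve.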

The most common manufacturing downtime reasons, and where to focus

Most teams instinctively focus on catastrophic mechanical breakdowns. They're dramatic, visible, and easy to rally around. But the data tells a more nuanced story.

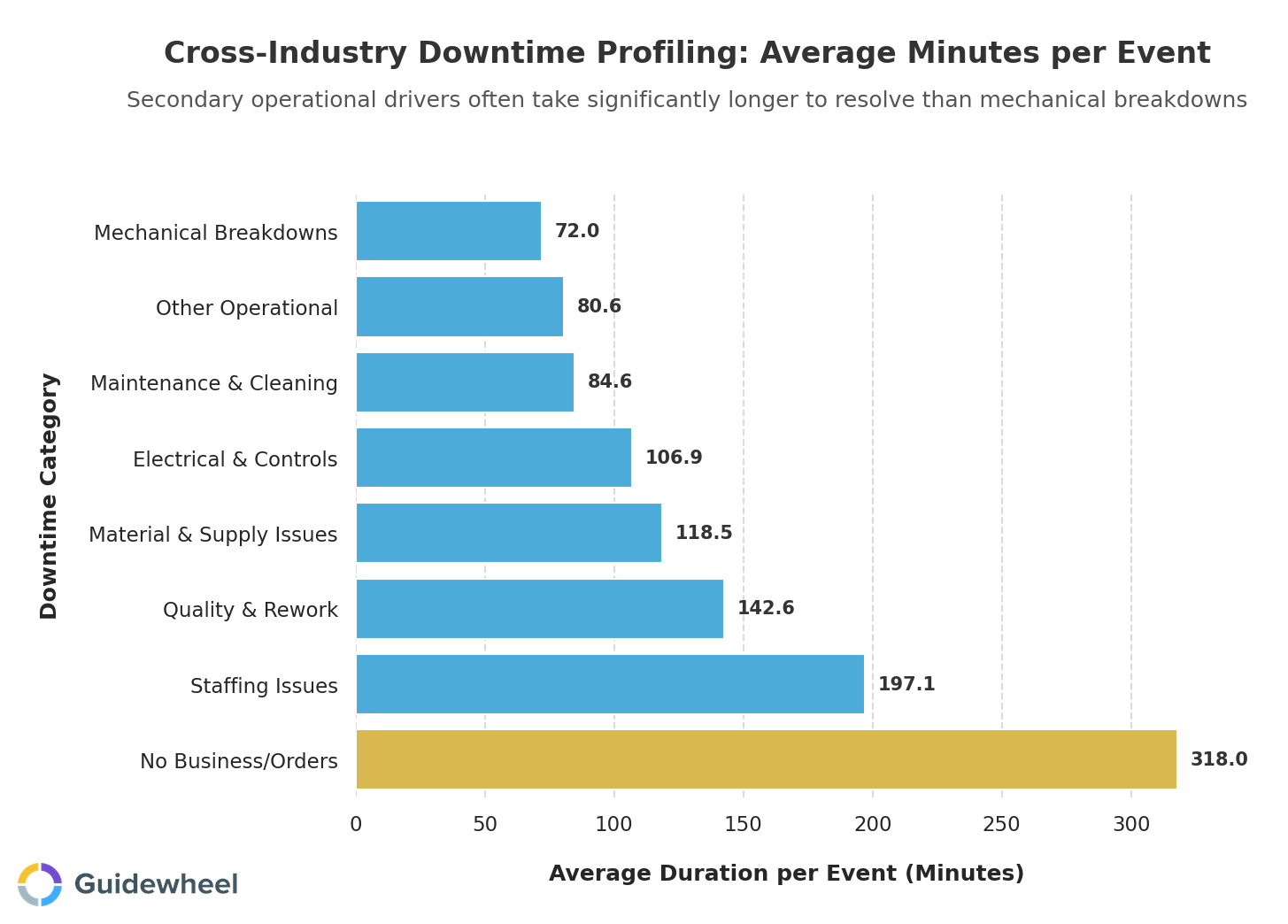

Analysis from Guidewheel's performance dataset (spanning 3,300+ downtime events across 1,100+ machines) shows that while mechanical breakdowns are frequent, several other categories consume far more time per event:

Staffing issues and material supply delays are often overlooked in favor of mechanical breakdowns, but they cause far more downtime per event: 197 minutes and 119 minutes, respectively, compared with 72 minutes for mechanical failures. When prioritizing improvement efforts, remember that total lost time equals the number of events multiplied by their average duration. Reducing the frequency and duration of these longer-duration categories first typically offers the greatest return on invested time and resources.

The takeaway for plant leaders? Look beyond breakdowns: each of your top five controllable downtime categories deserves attention.

| Downtime category | Avg. duration per event | Why it matters |
|---|---|---|
| Staffing issues | 197 min | When they hit, they're devastating; remote monitoring and staggered scheduling can help |
| Material & supply delays | 119 min | Upstream visibility and buffer stock strategies reduce these losses |
| Mechanical breakdowns | 72 min | Frequent but shorter; preventive maintenance schedules target these directly |
| Maintenance & cleaning | 85 min | Standardizing procedures across sites recovers significant planned time |
| Other operational | 81 min | A catch-all that signals your reason code framework needs refinement |
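Average duration alone doesn't tell you which category costs the most; total lost time is event count multiplied by average duration. The Python sketch below ranks reason codes by total minutes from a hypothetical event log. The event counts are invented for illustration and do not come from Guidewheel's dataset; only the per-category averages mirror the table above. With these made-up counts, mechanical breakdowns rank second in total despite the shortest average, simply because they happen more often.

```python
from collections import defaultdict

# Hypothetical event log of (reason_code, minutes). Counts are invented;
# per-category averages match the table above.
events = [
    ("staffing", 190), ("staffing", 204),
    ("material", 119), ("material", 119),
    ("mechanical", 70), ("mechanical", 68), ("mechanical", 75), ("mechanical", 75),
    ("maintenance", 85),
    ("other", 81),
]


def summarize(log):
    """Return (reason, events, avg_min, total_min) rows ranked by total minutes lost."""
    totals = defaultdict(int)
    counts = defaultdict(int)
    for reason, minutes in log:
        totals[reason] += minutes
        counts[reason] += 1
    rows = [(r, counts[r], totals[r] / counts[r], totals[r]) for r in totals]
    return sorted(rows, key=lambda row: row[3], reverse=True)


for reason, n, avg, total in summarize(events):
    print(f"{reason:<12} events={n}  avg={avg:5.1f} min  total={total} min")
```

Reviewing count, average, and total side by side keeps a team from chasing either the most dramatic events or the most frequent ones in isolation.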

The best way to define downtime reasons across all plants is to start simple: pick your top 5 to 10 categories, train every shift to use the same dropdown list, and expand only after the system is stable. Free-text entries and vague labels like "machine issue" will undermine your data quality fast.
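A shared dropdown is easiest to enforce when the list lives in one place and every plant's legacy labels are mapped onto it explicitly. The Python sketch below uses a hypothetical five-code `Reason` enum and a hypothetical `LOCAL_ALIASES` table; any label that is neither a shared code nor a known alias is rejected instead of silently landing in a vague bucket.

```python
from enum import Enum


class Reason(Enum):
    """Hypothetical shared reason-code list used by every plant and shift."""
    MECHANICAL = "Mechanical breakdown"
    MATERIAL = "Material & supply delay"
    STAFFING = "Staffing issue"
    MAINTENANCE = "Maintenance & cleaning"
    CHANGEOVER = "Changeover"


# Hypothetical plant-specific labels mapped onto the shared list during rollout
LOCAL_ALIASES = {
    "tool broke": Reason.MECHANICAL,
    "waiting on resin": Reason.MATERIAL,
    "no operator": Reason.STAFFING,
    "washdown": Reason.MAINTENANCE,
}


def to_standard(label: str) -> Reason:
    """Map a dropdown value or known local alias to a shared code; reject anything else."""
    key = label.strip().lower()
    for reason in Reason:
        if key == reason.value.lower():
            return reason
    if key in LOCAL_ALIASES:
        return LOCAL_ALIASES[key]
    raise ValueError(f"Unmapped reason {label!r}: add it to the shared list or an alias")


print(to_standard("Washdown"))  # a Plant-specific label resolved to the shared code
```

Raising an error on labels like "machine issue" is a deliberate choice: it forces the gap to be fixed in the shared list rather than absorbed into free text.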

How machine monitoring builds a source of truth

Manual logging is where standardization goes to die. Operators are busy running production, not entering data. By shift-end, a 45-minute stoppage becomes "around 40 to 50 minutes," and the reason code is a best guess.

Automated production monitoring software solves this by capturing machine state (running versus stopped) directly from equipment signals. The operator's only job is to confirm a reason code from a short dropdown within the first few minutes of a stoppage. That's 10 seconds of input, not 10 minutes of paperwork.
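The division of labor described above (the system detects the stop, the operator only names it) can be modeled as a small record with a confirmation deadline. The Python sketch below is illustrative only: the `Stoppage` class, the machine names, and the 5-minute `CONFIRM_WINDOW` standing in for "the first few minutes" are all assumptions, not a description of any vendor's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical confirmation window standing in for "the first few minutes"
CONFIRM_WINDOW = timedelta(minutes=5)


@dataclass
class Stoppage:
    machine: str
    start: datetime                          # detected from the machine signal, not typed in
    reason: Optional[str] = None             # the operator's only input
    confirmed_at: Optional[datetime] = None

    def confirm(self, reason: str, at: datetime) -> None:
        """Record the operator's dropdown selection."""
        self.reason = reason
        self.confirmed_at = at

    def needs_follow_up(self, now: datetime) -> bool:
        """True once the window has passed without a reason, or if the reason came in late."""
        deadline = self.start + CONFIRM_WINDOW
        if self.confirmed_at is None:
            return now > deadline
        return self.confirmed_at > deadline


t0 = datetime(2024, 3, 4, 6, 0)
stop = Stoppage("CNC-07", start=t0)
stop.confirm("Mechanical breakdown", at=t0 + timedelta(minutes=3))
print(stop.needs_follow_up(now=t0 + timedelta(minutes=30)))  # False: confirmed in time
```

Tracking late or missing confirmations gives supervisors a simple data-quality metric per shift without adding anything to the operator's workload.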

For legacy machines without network-ready PLCs, solutions like Guidewheel use simple clip-on current sensors that read the machine's electrical signal (essentially its heartbeat) and work over cellular connections. No PLC reprogramming, no complex IT project. The system then translates that raw current signal into accurate run, stop, and idle states and the analytics built on them.
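To illustrate the general idea of turning a current signal into machine states, here is a minimal threshold-based sketch in Python. It is not Guidewheel's algorithm: the `OFF_BELOW` and `RUN_ABOVE` thresholds, the one-reading-per-minute sampling, and the sample values are hypothetical, and real deployments would calibrate thresholds per machine.

```python
from itertools import groupby

# Hypothetical thresholds in amps; real values must be calibrated per machine
OFF_BELOW = 0.5
RUN_ABOVE = 8.0


def classify(amps: float) -> str:
    """Map one current reading to a coarse machine state."""
    if amps < OFF_BELOW:
        return "off"
    if amps >= RUN_ABOVE:
        return "running"
    return "idle"  # powered (controls, hydraulics on) but not producing


def to_intervals(samples: list[float], sample_s: int = 60) -> list[tuple[str, int]]:
    """Collapse evenly spaced readings into consecutive (state, duration_seconds) runs."""
    return [
        (state, sum(1 for _ in group) * sample_s)
        for state, group in groupby(classify(a) for a in samples)
    ]


readings = [12.1, 11.8, 12.4, 3.2, 3.0, 0.1, 0.2, 11.9, 12.0]  # one reading per minute
print(to_intervals(readings))
# [('running', 180), ('idle', 120), ('off', 120), ('running', 120)]
```

A production system would likely add hysteresis or a minimum state duration so that a single noisy reading does not register as a stoppage; the sketch omits that to keep the core mapping visible.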

This approach means you can get every machine, from a decades-old hydraulic press to a brand-new CNC mill, reporting into one unified OEE dashboard without a rip-and-replace project.

Why your multi-site benchmarks might be misleading

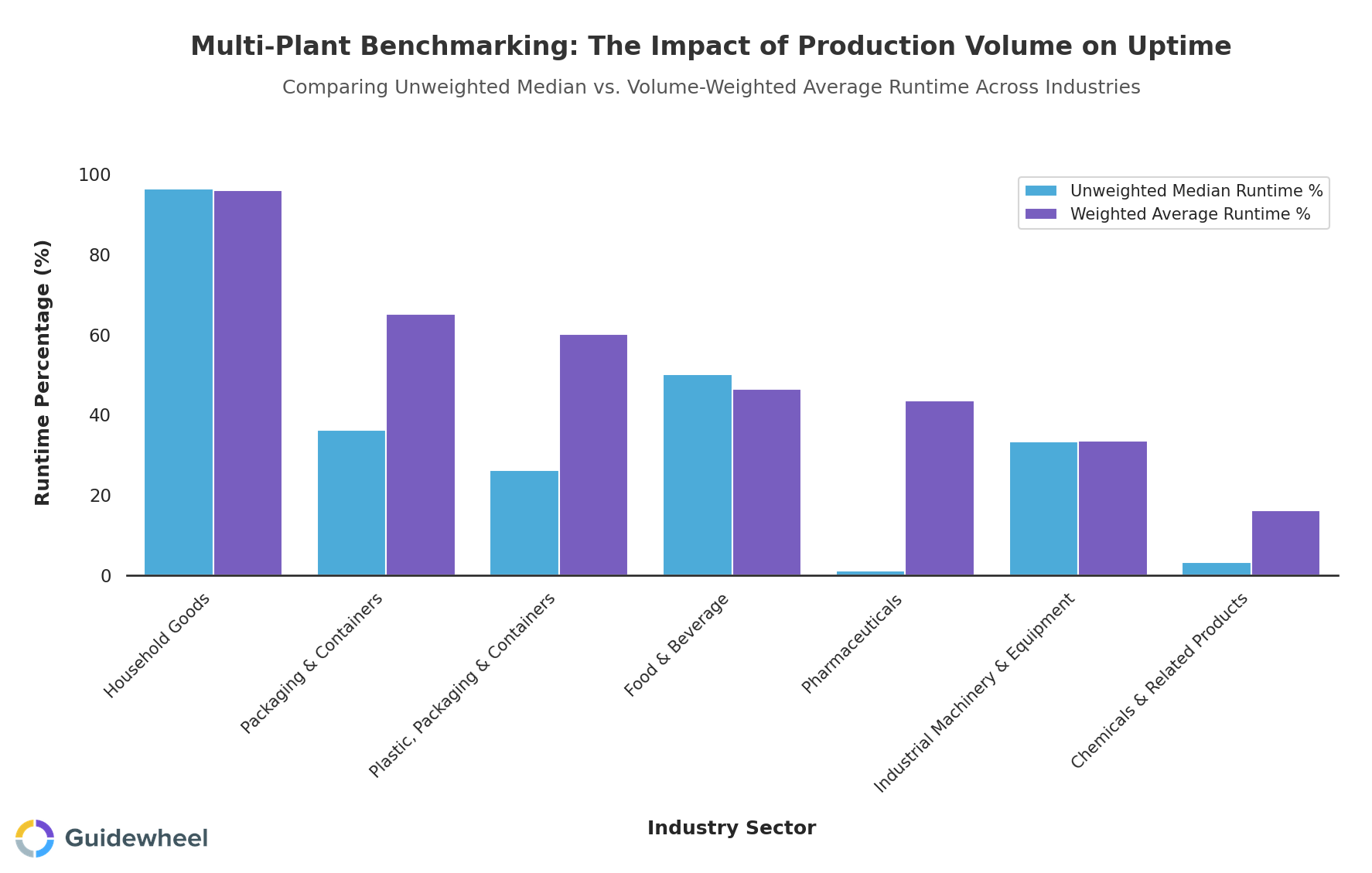

Here's a subtlety that trips up a lot of operations directors: simple averages lie when production volumes differ across sites or lines.

Consider this: if Plant A runs 3 high-volume injection molding machines at 80% uptime and Plant B has 20 low-utilization machines at 30% uptime, a simple average across all 23 machines puts your fleet at about 37% ((3 × 80% + 20 × 30%) / 23 ≈ 36.5%). Weighted by production volume, the picture shifts dramatically toward Plant A's 80%, because Plant A's machines produce the vast majority of your output.
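The effect is easy to check numerically. The paragraph gives no production volumes, so the Python sketch below assumes hypothetical ones: 10,000 units per month for each Plant A machine and 500 for each Plant B machine. Under those assumptions the simple mean is about 36.5%, the median is 30%, and the volume-weighted uptime is 67.5%.

```python
from statistics import mean, median

# Hypothetical fleet: (plant, uptime fraction, monthly units) per machine
fleet = [("A", 0.80, 10_000)] * 3 + [("B", 0.30, 500)] * 20


def volume_weighted_uptime(machines):
    """Uptime where each machine counts in proportion to the units it produces."""
    total_units = sum(units for _, _, units in machines)
    return sum(uptime * units for _, uptime, units in machines) / total_units


uptimes = [uptime for _, uptime, _ in fleet]
print(f"simple mean:     {mean(uptimes):.1%}")
print(f"median:          {median(uptimes):.1%}")
print(f"volume-weighted: {volume_weighted_uptime(fleet):.1%}")
```

The exact weighted figure depends entirely on the volumes you plug in; the point is that the machines making most of your output should dominate the fleet number.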

This chart shows the gap between unweighted median and volume-weighted uptime. In Pharmaceuticals, the median is just 1% while the weighted average hits 44%, because a handful of high-volume lines run around the clock while many others sit idle by design (Source: Guidewheel Performance Analysis).

To create a plant-to-plant benchmark that's fair, you need to weight by production volume and compare like equipment to like equipment. A packaging line and a CNC machining center have fundamentally different uptime profiles, and neither is "wrong."
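Both rules (weight by volume, compare like with like) can be combined by grouping on equipment class before weighting. The Python sketch below uses hypothetical machine records; the plant names, classes, uptimes, and unit counts are made up for illustration.

```python
from collections import defaultdict

# Hypothetical per-machine records: (plant, equipment_class, uptime fraction, units)
machines = [
    ("A", "packaging", 0.85, 40_000), ("A", "cnc", 0.55, 1_200),
    ("B", "packaging", 0.78, 35_000), ("B", "cnc", 0.62, 900),
    ("B", "cnc", 0.46, 300),
]


def benchmark(records):
    """Volume-weighted uptime per (equipment_class, plant), so only like assets are compared."""
    weighted = defaultdict(float)
    units = defaultdict(float)
    for plant, eq_class, uptime, n in records:
        weighted[(eq_class, plant)] += uptime * n
        units[(eq_class, plant)] += n
    return {key: weighted[key] / units[key] for key in sorted(units)}


for (eq_class, plant), uptime in benchmark(machines).items():
    print(f"{eq_class:<10} plant {plant}: {uptime:.1%}")
```

Sorting by equipment class first puts each plant's packaging lines next to each other and each plant's CNC cells next to each other, which is the comparison that actually means something.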

Five OEE implementation pitfalls to avoid

Knowing the formula is easy. Sustaining a reliable OEE implementation across sites is where most teams stumble.

| Pitfall | What goes wrong | The fix |
|---|---|---|
| Inconsistent definitions | Plant A excludes changeovers from availability; Plant B includes them | Publish a 1-page OEE definition sheet; audit quarterly |
| Manual data burden | Operators skip logging when production pressure is high | Automate state capture; limit manual input to reason code confirmation |
| OEE as a scorecard only | Leadership sees "68%" but can't drill into which loss type drives it | Build an OEE-to-action workflow: deviation → drill-down → root cause → countermeasure |
| No benchmarking context | A plant hits 72% and declares victory with no peer comparison | Publish monthly cross-site comparisons; identify best-performing lines and replicate practices |
| Technology before process | Software deployed, but no daily huddle, no escalation rules, no improvement cadence | Design your operating model first: who reviews what, when, and what triggers action |
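The first two steps of an OEE-to-action workflow (spot the deviation, then see which loss type drives it) can be automated. In the Python sketch below, the line names, factor values, and `TARGET_OEE` are hypothetical. Because OEE is a product, the lowest of the three factors is the one costing the most, so it is a reasonable first place to drill down; root cause and countermeasure still come from the reason-code data and the team.

```python
# Hypothetical per-line results: (Availability, Performance, Quality) as fractions
lines = {
    "Plant A / Line 1": (0.92, 0.88, 0.98),
    "Plant A / Line 2": (0.70, 0.95, 0.97),
    "Plant B / Line 1": (0.90, 0.74, 0.99),
}
TARGET_OEE = 0.75  # hypothetical target set by the operating model


def deviations(results, target):
    """Yield lines below target with their weakest factor (the biggest multiplicative loss)."""
    for line, (a, p, q) in results.items():
        oee = a * p * q
        if oee < target:
            factor, value = min(
                (("availability", a), ("performance", p), ("quality", q)),
                key=lambda pair: pair[1],
            )
            yield line, oee, factor, value


for line, oee, factor, value in deviations(lines, TARGET_OEE):
    print(f"{line}: OEE {oee:.1%}, biggest loss is {factor} ({value:.0%})")
```

Routing each flagged line to the owner of its weakest factor (maintenance for availability, process engineering for performance, quality for quality) is one simple way to connect the number to a person and a next step.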

Each facility has unique operational requirements, so adapt these guardrails to your context. The goal isn't rigid uniformity; it's enough consistency that the numbers mean the same thing everywhere.

Start tracking what matters at every site

If you're running multiple lines or plants and still piecing together performance from spreadsheets and shift-end summaries, the path forward doesn't require a massive IT project. It starts with a shared definition of what "good" looks like, automated data capture at the machine level, and a simple operating rhythm that turns numbers into action.

The manufacturers that move fastest start small, prove value on a pilot line, and scale from there.

We had our best month of the year, increasing production from 26k-35k/month to 46k cases in March. I attribute this to Guidewheel. Being able to see downtime data and address downtime reasons directly correlates to higher production.

Michael Palmer, VP of Operations, Direct Pack via Guidewheel's Customer Research

Ready to build a standardized source of truth across your sites? Book a Demo to see how quickly you can move from fragmented data to clear, shared numbers.

Frequently asked questions

How do you calculate OEE correctly in a real manufacturing environment?

OEE is Availability multiplied by Performance multiplied by Quality. The key to accuracy is automated data capture for machine state and cycle time, combined with standardized definitions across all sites for what counts as scheduled time, planned downtime, and defective output. Manual spreadsheet calculations introduce delays and inconsistencies that erode trust in the numbers.

What is considered a good OEE score by industry?

Industry benchmarks suggest that established manufacturers typically operate between 75 and 85% OEE, while world-class operations reach 85 to 95%. Plants earlier in their monitoring journey often sit between 40 and 65%. These are reference points, not universal targets. Your optimal OEE depends on your product mix, equipment type, changeover frequency, and operational priorities.

How do you implement OEE without adding manual data entry burden to operators?

Automate as much as possible. Machine state (running, stopped, alarm) should be captured directly from equipment signals or current sensors, not typed into a form. The only manual step for operators should be confirming a reason code from a short dropdown list within the first few minutes of a stoppage. If it takes more than 10 seconds, adoption will suffer.

How should plants benchmark uptime and OEE across lines, shifts, and sites?

Use volume-weighted averages rather than simple averages to account for production volume differences. Compare like equipment to like equipment, since a packaging line and a CNC cell have inherently different uptime profiles. Publish monthly cross-site comparisons, identify your best-performing line or shift, and investigate what they're doing differently before assuming underperformers are doing something wrong.

What software capabilities matter most for production monitoring?

Prioritize automated machine state capture, configurable reason codes, OEE calculation with drill-down to shift and line level, multi-site aggregation, and API connectivity to your existing ERP or BI tools. Avoid platforms that require a full MES implementation or can't support your older equipment. The fastest path to value is a lightweight overlay that works on all your machines, legacy and new, without disrupting current workflows.

About the author

Lauren Dunford is the CEO and Co-Founder of Guidewheel, a FactoryOps platform that empowers factories to reach a sustainable peak of performance. A graduate of Stanford, she is a JOURNEY Fellow and World Economic Forum Tech Pioneer. Watch her TED Talk—the future isn't just coded, it's built.